March 23, 2026

Coding Is Dead. Engineering Never Was.

The spec is the product now. Code is a commodity. Here's what that means and what I'm building in response.

This might be a hot take. If you’re easily offended, you might not wanna read this post.

Google principal engineer Jaana Dogan publicly admitted in January that Claude Code replicated in one hour what her team spent a year building. A distributed agent orchestration system. Three paragraphs of prompt. One hour. She wasn’t joking. Her words: “this isn’t funny.”

No it isn’t. And most of you still aren’t paying attention.

The signals are screaming

Jensen Huang went on the All-In Podcast last week and said something that should make every engineer sit up straight. If a $500,000 engineer isn’t burning at least $250,000 in tokens per year, Huang says he’s “deeply alarmed.” If they only spent $5,000? His exact words: “I will go ape.” He compared engineers not using AI to chip designers saying they’ll just use paper and pencil. When the hosts asked if Nvidia is spending $2 billion on tokens for its engineering team, Huang’s response was “We’re trying to.” Tokens are now a performance metric in Silicon Valley. People are literally asking “how many tokens come with my job?” before they ask about equity.

Let that sink in for a second.

Satya Nadella says 20 to 30% of Microsoft’s code is already AI-generated. Zuckerberg thinks Meta will hit 50% within 12 to 18 months. Microsoft CTO Kevin Scott said on the 20VC podcast that 95% of code will be AI-generated within five years, but humans will still lead authorship and design. Dario Amodei predicted at CFR that 90% of code would be AI-written within months and doubled down at Davos 2026, saying we’re 6 to 12 months from AI handling “most, maybe all” of what software engineers do end to end.

Read that again. The leaders of the companies building the future of compute are all saying the same thing. Code is becoming a commodity. The thing that matters is what comes before the code.

The spec is the product

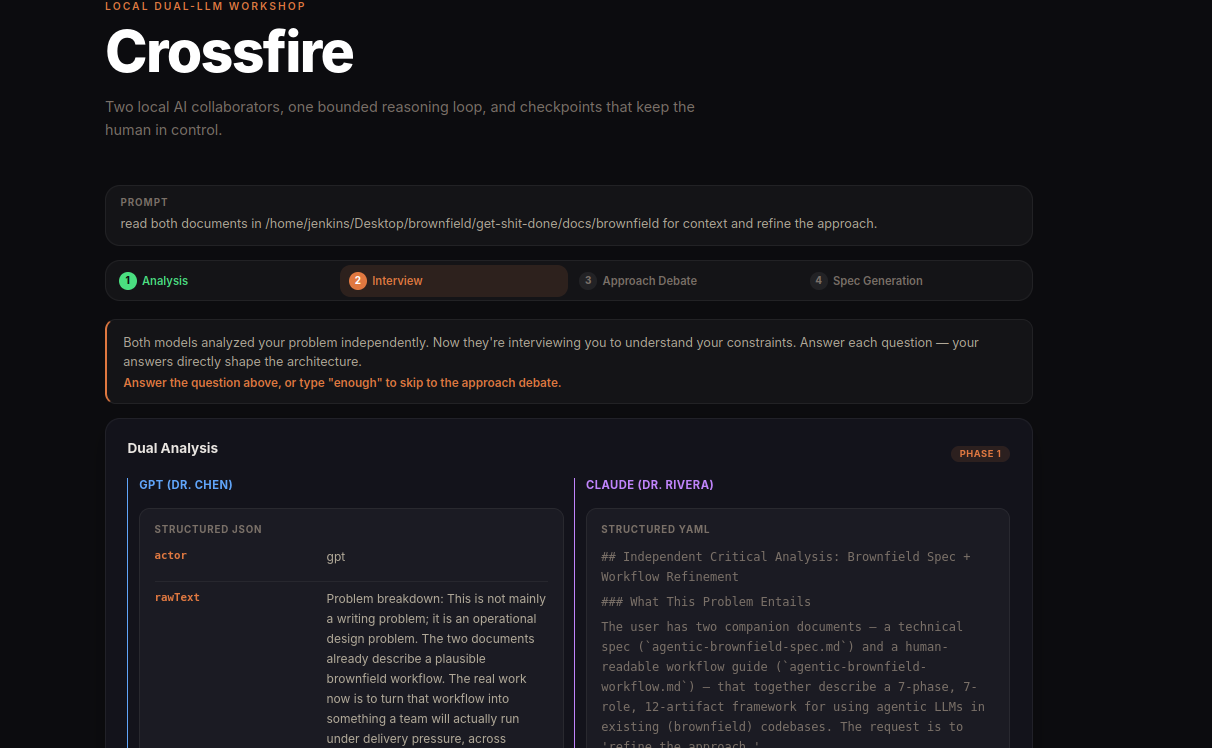

I blogged about this recently in my Crossfire post. The whole premise of that project is that the spec is the bottleneck, not the code. Once you have a good implementation plan (one that’s been stress-tested, accounts for edge cases, has concrete file paths and TDD task ordering), the actual coding is almost mechanical. Both Claude and Codex are excellent at turning well-defined plans into working code.

The problem? Getting to that well-defined plan. LLMs are people-pleasers by default. Ask one model to spec something and it gives you something coherent and plausible. Looks great on first read. But “coherent and plausible” hides a lot of sins. Unstated assumptions. Architectural decisions that don’t survive reality. Missing failure modes. Scope that quietly ballooned because the model said yes to everything you implied.

That’s why Crossfire uses adversarial debate between Claude and Codex. Two models enter. One spec leaves. The specs coming out of that process are buildable. Not “looks good on a slide” buildable. “Sit down and start writing tests” buildable. 19,000 characters of implementation plan with deterministic serialization rules, 8-step reconnection protocols, acceptance criteria, and risk assessment.
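For anyone who hasn’t looked at Crossfire yet, the core loop is simple enough to sketch. The Python below is not Crossfire’s actual code; it’s a minimal illustration under my own assumptions. `propose` and `critique` are hypothetical stand-ins for calls to two different models (one drafting, one attacking), and the loop stops when the critic runs out of objections or the round budget runs out, at which point a human takes over.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical model hooks (not a real API):
#   propose(task, objections) -> a new spec draft that tries to address the objections
#   critique(spec) -> a list of objections; an empty list means "nothing left to attack"
Proposer = Callable[[str, list[str]], str]
Critic = Callable[[str], list[str]]


@dataclass
class DebateResult:
    spec: str
    rounds: int
    converged: bool
    history: list[list[str]] = field(default_factory=list)  # objections per round


def adversarial_debate(task: str, propose: Proposer, critique: Critic,
                       max_rounds: int = 5) -> DebateResult:
    """Alternate drafting and attacking until the critic has no objections
    or the round budget is spent (then a human should look at it)."""
    objections: list[str] = []
    history: list[list[str]] = []
    spec = ""
    for round_no in range(1, max_rounds + 1):
        spec = propose(task, objections)
        objections = critique(spec)
        history.append(objections)
        if not objections:
            return DebateResult(spec, round_no, True, history)
    return DebateResult(spec, max_rounds, False, history)


# Toy stubs so the control flow runs without any model.
REQUIRED_CONCERNS = ["timeouts", "reconnect"]


def toy_proposer(task: str, objections: list[str]) -> str:
    # Pretend the drafting model adds a section for each objection it was given.
    return f"{task} | handles: {', '.join(objections)}" if objections else task


def toy_critic(spec: str) -> list[str]:
    # Pretend the attacking model flags every required concern the draft ignores.
    return [c for c in REQUIRED_CONCERNS if c not in spec]


result = adversarial_debate("retry sync", toy_proposer, toy_critic)
print(result.rounds, result.converged)  # 2 True
```

The design choice that matters is the exit condition. “The critic has nothing left to say” is a much stronger signal than “the author thinks it looks good,” and a debate that hits `max_rounds` without converging is itself useful information for the human.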

I’m not the only one seeing this

And I wanna be clear about that. Credit where it’s due.

Literally today, Dave Kennedy (@HackingDave) posted a tweet about building a ChatGPT 5.4 plugin for Claude that automatically gets a “second opinion” for PRD/spec/implementation, then submits the finished code to ChatGPT for bug review and security analysis. His conclusion: “Works insanely better having two work together.”

That’s Crossfire. That’s the same idea I built and open-sourced a few days ago. And Dave arrived at it independently because the pattern is obvious once you actually use these tools for real work instead of just talking about them on LinkedIn.

Jesse Vincent’s Superpowers has been doing incredible work on the spec-driven side. A full skills framework that forces your coding agent to brainstorm before it writes, plan before it executes, and TDD before it ships. It’s become one of the most popular Claude Code plugins for a reason: it works. The brainstorm-then-plan-then-implement loop is the same core insight that drives everything I’m building, just approached from a different angle.

GSD (Get Shit Done) by TÂCHES takes this even further with context engineering that solves the “context rot” problem (your AI getting sloppier as conversations get longer). Subagent orchestration, atomic commits per task, wave-based dependency graphs, fresh context windows per execution unit. Their tagline nails it: “No enterprise roleplay bullshit. Just an incredibly effective system for building cool stuff consistently.” 30K+ stars. Trusted by engineers at Amazon, Google, Shopify. The community clearly wants this.
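“Wave-based dependency graphs” sounds fancier than it is. The generic technique is a layered topological sort: group tasks so every task in a wave depends only on tasks in earlier waves, then run each wave in parallel, each task with a fresh context. The sketch below is my own illustration of that technique, not GSD’s implementation, and the task names are made up.

```python
# Generic technique sketch (layered topological sort); not GSD's code.
def plan_waves(deps: dict[str, set[str]]) -> list[list[str]]:
    """deps maps each task to the set of tasks it depends on. Every task in a
    returned wave depends only on tasks in earlier waves, so the tasks within
    one wave can run in parallel (e.g. one fresh agent context each)."""
    # Tasks that only appear as dependencies still need to be scheduled.
    all_tasks = set(deps)
    for prereqs in deps.values():
        all_tasks |= prereqs
    # Work on copies so the caller's sets are not mutated.
    remaining = {task: set(deps.get(task, set())) for task in all_tasks}

    waves: list[list[str]] = []
    while remaining:
        ready = sorted(t for t, prereqs in remaining.items() if not prereqs)
        if not ready:
            raise ValueError(f"dependency cycle among: {sorted(remaining)}")
        waves.append(ready)
        for task in ready:
            del remaining[task]
        for prereqs in remaining.values():
            prereqs.difference_update(ready)
    return waves


# Hypothetical tasks from an implementation plan:
deps = {
    "db schema": set(),
    "api endpoints": {"db schema"},
    "cli commands": {"db schema"},
    "integration tests": {"api endpoints", "cli commands"},
}
for number, wave in enumerate(plan_waves(deps), start=1):
    print(f"wave {number}: {wave}")
```

Python’s standard library also ships `graphlib.TopologicalSorter`, whose `get_ready()` and `done()` methods support the same run-a-batch-then-unlock-the-next pattern if you’d rather not hand-roll it.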

So the ideas are out there. People are building. That’s the good news.

Here’s what frustrates me. Dave, Jesse, the GSD team, and I are all thinking about the same problems, building overlapping solutions, and mostly finding out about each other’s work by accident on Twitter or GitHub trending. There’s no venue for this convergence. No place where the people who are actually doing the hard thinking about AI-first development workflows sit down and compare notes. Instead we have conferences where someone demos the latest tool release to a room full of people who clap politely and then go back to using ChatGPT as a search engine.

The field feels more fragmented and gatekept than ever, which is ironic given that everyone and their grandma can use AI to build solutions. And that’s exactly the point. The moat is no longer in the solutions. Solutions are becoming trivial. Anyone with a Claude subscription can build an app in an afternoon. The moat is in the ideas. The problems we choose to solve. The architectures we design. The edge cases we anticipate. The failure modes we plan for.

That’s what conferences should be talking about. Not “here’s a new model, look at the benchmarks.” Not “look at this cool tool release.” But “here’s a class of problems nobody’s solving, here’s how I think about it, here’s where I’m wrong, let’s figure it out together.” Ideas. How we can shape the future to be safer, faster, better, and more intelligent (which is ironic, since AI is supposedly the intelligent part).

An open invitation

I hate the term “thought leader.” Makes my skin crawl. But I don’t have a better word for the people who are actually pushing this field forward through practice rather than engagement farming.

So here’s my invitation, openly, to anyone who’s building in this space. Dave. Jesse. The TÂCHES team. Anyone else who’s independently arrived at multi-model adversarial workflows or spec-driven development systems. Anyone who’s thinking about autonomous feedback loops, brownfield conversion, or human-in-the-loop governance for AI-generated code. I wanna hear from you. I wanna compare notes. I wanna argue about where we disagree. And I wanna figure out how we pool these ideas instead of reinventing the same wheel in five different repositories.

I’m a 31-year-old sitting in Portugal building this stuff mostly alone. I don’t have a massive team or a VC-backed startup behind me. What I have is years of offensive security experience, a stubborn belief that the community deserves better tooling, and an inbox that’s open. If you’re working on the same problems, reach out. If you think I’m wrong about something, tell me. The worst thing we can do right now is keep solving the same problems in parallel without talking to each other while the LinkedIn crowd farms engagement off screenshots of Claude output.

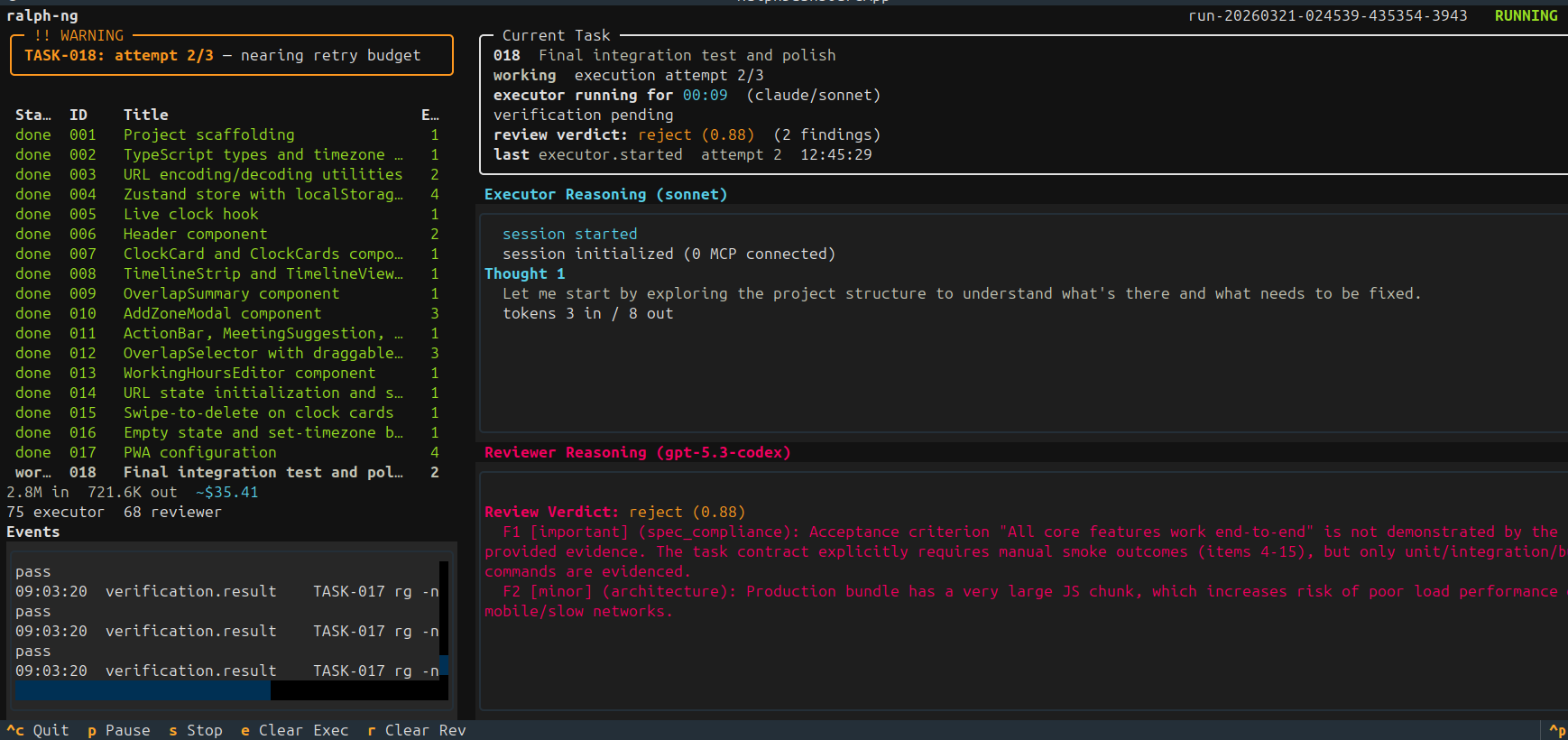

Ralph-NG: adversarial loops as first-class citizens

If you’ve been following my work, you’ve seen the Ralph workflow. Recursive AI-driven development loops with human checkpoints. Ralph worked. But Ralph was v1 thinking applied to a v2 problem.

Ralph-NG is the next generation. I’m alpha testing it right now, ahead of its open-source release.

The core difference: adversarial feedback loops aren’t bolted on anymore. They’re baked into the orchestration layer from day one. Every planning phase, every spec review, every implementation checkpoint runs through structured adversarial validation. Not “hey Claude, does this look good?” (which gets you a polite yes and a pat on the back). Actual adversarial prompting where a second model is explicitly incentivized to tear the first model’s work apart.

Think of it as Crossfire’s debate engine generalized into a full development lifecycle. Planning. Implementation. Review. All of it with adversarial tension built in. The human is the orchestrator, the decision maker, the one who says “ship it” or “go deeper.” Not the one typing semicolons.
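Here’s roughly how I think about that control flow, as a hypothetical Python sketch. None of these names come from Ralph-NG (it isn’t public yet); `phases`, `critic`, and `human` are placeholder callables. The point is where the human sits: every phase output gets attacked by a critic first, and then a person reads both the artifact and the objections and decides “ship it” or “go deeper.”

```python
from enum import Enum
from typing import Callable


class Decision(Enum):
    SHIP = "ship it"
    DEEPER = "go deeper"


# Placeholder hooks, all hypothetical:
#   phase(previous_artifact, feedback) -> new artifact
#   critic(artifact) -> list of objections from a second model
#   human(phase_name, artifact, objections) -> Decision
Phase = Callable[[str, list[str]], str]
Critic = Callable[[str], list[str]]
Human = Callable[[str, str, list[str]], Decision]


def run_lifecycle(request: str, phases: dict[str, Phase], critic: Critic,
                  human: Human, max_passes: int = 3):
    """Run each phase in order; nothing advances without a human 'ship it'."""
    artifact = request
    log: list[tuple[str, int, Decision]] = []
    for name, phase in phases.items():
        feedback: list[str] = []
        for attempt in range(1, max_passes + 1):
            candidate = phase(artifact, feedback)
            objections = critic(candidate)                 # adversarial pass first
            decision = human(name, candidate, objections)  # then the human decides
            log.append((name, attempt, decision))
            if decision is Decision.SHIP:
                artifact = candidate
                break
            feedback = objections  # "go deeper": retry with the critique attached
        else:
            raise RuntimeError(f"{name}: no sign-off after {max_passes} passes")
    return artifact, log


# Toy stand-ins so the flow runs end to end.
def make_phase(tag: str) -> Phase:
    def phase(previous: str, feedback: list[str]) -> str:
        extra = f" [{'; '.join(feedback)}]" if feedback else ""
        return f"{previous} > {tag}{extra}"
    return phase


def toy_critic(artifact: str) -> list[str]:
    return [] if "edge cases" in artifact else ["edge cases"]


def cautious_human(phase_name: str, artifact: str, objections: list[str]) -> Decision:
    return Decision.SHIP if not objections else Decision.DEEPER


phases = {"planning": make_phase("plan"),
          "implementation": make_phase("implement"),
          "review": make_phase("review")}
final, log = run_lifecycle("sync feature", phases, toy_critic, cautious_human)
print(final)  # sync feature > plan [edge cases] > implement > review
```

The `for ... else` raises if a phase never gets a “ship it”. That’s deliberate: an endless go-deeper loop should surface to a person, not quietly burn tokens.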

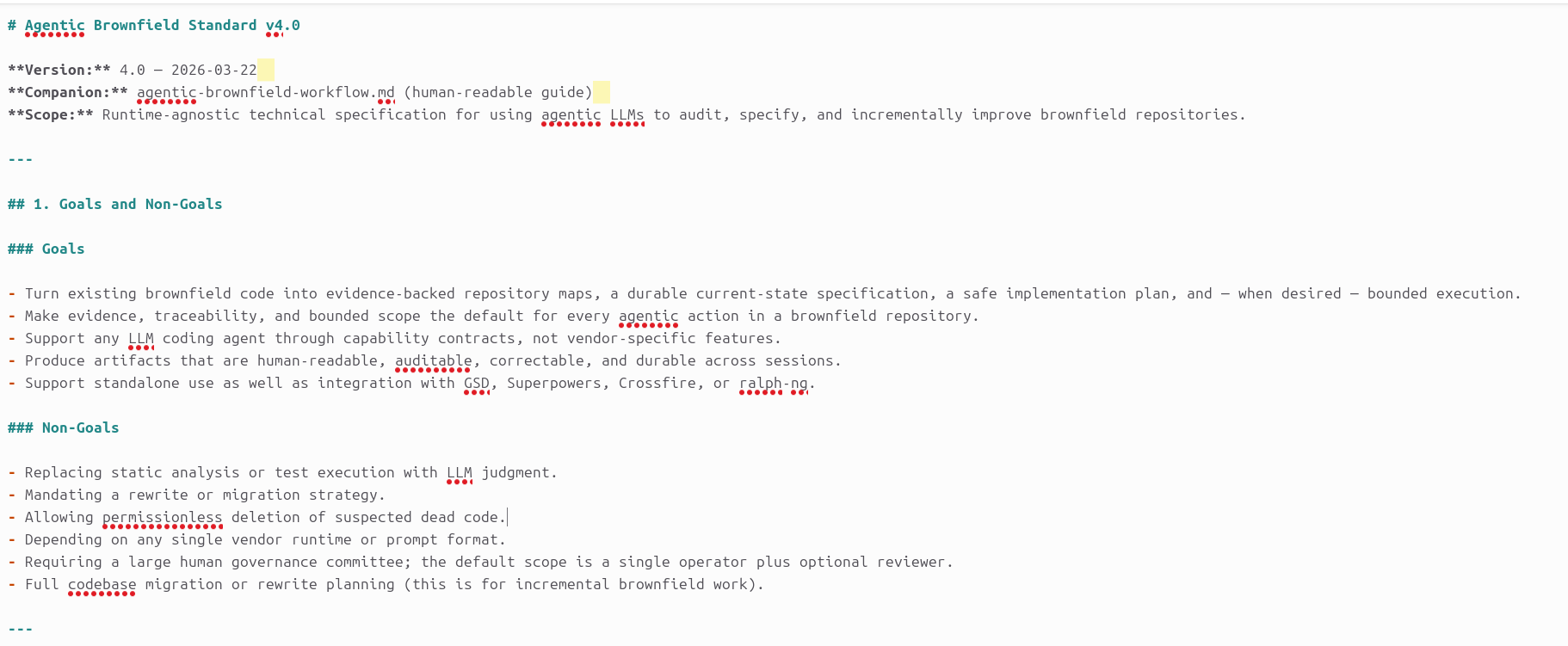

Brownfield conversion: the unsexy problem nobody’s solving

Here’s the thing that keeps me up at night. Everyone’s excited about greenfield AI-first projects. Cool. What about the other 99% of software that already exists?

I’m actively researching brownfield conversion. Taking existing codebases (human-written, AI-written, or the increasingly common Frankenstein mix of both) and making them AI-friendly. Identifying sloppy code. Finding misaligned specs (if specs even exist, which, let’s be real, they usually don’t). Flagging the parts where the original developer said “I’ll fix this later” and never came back.

This is the unsexy work. Nobody’s writing breathless blogposts about cleaning up a 2019 Django monolith. But it’s where the real value is, because most companies aren’t starting from scratch. They have legacy systems that need to coexist with AI-first workflows. And right now, feeding a messy codebase to an AI agent is a great way to get confidently wrong output at high speed.

My goal is tooling that can analyze an existing codebase, map it against whatever spec or architectural documentation exists (or should exist), identify the gaps, and produce a remediation plan that an AI coding agent can actually execute on. Brownfield to greenfield-quality, incrementally, with human oversight at every decision point.
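A heavily simplified version of that spec-to-code mapping might look like this. It assumes a convention most legacy code doesn’t follow (hypothetical requirement IDs like `REQ-12` in the spec and in code comments), and it only catches debt someone bothered to mark with `TODO`, `FIXME`, or `HACK`. Real brownfield analysis needs far more than regexes, so read this as an illustration of the gap-finding idea, not as the tooling I’m building.

```python
import re
from dataclasses import dataclass

# Assumed convention (hypothetical): requirements are tagged REQ-<number>
# in the spec and referenced by the same ID in code comments.
REQ_ID = re.compile(r"\bREQ-\d+\b")
# Only catches debt somebody actually marked.
DEBT_MARKER = re.compile(r"#\s*(?:TODO|FIXME|HACK)\b", re.IGNORECASE)


@dataclass
class GapReport:
    missing: list[str]                # in the spec, never referenced in code
    unspecified: list[str]            # referenced in code, absent from the spec
    debt: list[tuple[str, int, str]]  # (path, line number, line text)


def analyze(spec_ids: set[str], sources: dict[str, str]) -> GapReport:
    """Map spec requirements against source files held in memory (path -> text)."""
    referenced: set[str] = set()
    debt: list[tuple[str, int, str]] = []
    for path, text in sorted(sources.items()):
        for lineno, line in enumerate(text.splitlines(), start=1):
            referenced.update(REQ_ID.findall(line))
            if DEBT_MARKER.search(line):
                debt.append((path, lineno, line.strip()))
    return GapReport(sorted(spec_ids - referenced), sorted(referenced - spec_ids), debt)


def remediation_plan(report: GapReport) -> list[str]:
    """Turn the gaps into discrete steps a coding agent could be handed one at a time."""
    steps = [f"Implement or explicitly descope {r}" for r in report.missing]
    steps += [f"Write a spec entry for {r}, or remove the unspecified behavior"
              for r in report.unspecified]
    steps += [f"Resolve marked debt at {path}:{lineno}: {text}"
              for path, lineno, text in report.debt]
    return steps


spec = {"REQ-1", "REQ-2", "REQ-3"}
sources = {
    "app/views.py": "def login():  # REQ-1\n    pass  # TODO: rate limiting\n",
    "app/sync.py": "def sync():  # REQ-2, REQ-9\n    ...\n",
}
for step in remediation_plan(analyze(spec, sources)):
    print(step)
```

Each step stays small on purpose, so a human can approve, reject, or reorder them before any agent touches the codebase.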

Human in the loop, not human as the executor

This is the part where I need people to rewire their brains.

For 50 years, being a software engineer meant you typed code. You were the executor. The machine did what you told it to do, character by character, line by line. Your value was in the typing. In knowing the syntax. In remembering which flag to pass to that one CLI tool.

That era is ending. Fast.

The new model is human in the loop. You define the architecture. You identify the edge cases. You write the definition of done. You break the work into tasks. You set up the adversarial validation. You review, you approve, you reject. But you don’t type the code. The AI types the code. Often better than you would have, and in a fraction of the time.

Kevin Scott at Microsoft said the quiet part out loud: “Some of the conversations I have with my people right now are just so goddamn boring.” He’s looking for engineers who think about the full breadth of human understanding, how social systems work, how large groups of humans behave. Not people who can recite JavaScript APIs from memory.

Nadella himself said it: “You can’t just come in and say, ‘I’m smart. I have a bunch of ideas, but I don’t know exactly what to do.’ No, you have to know what to do when it is ambiguous, when it is uncertain.”

That’s engineering. That’s the job now. Clarity under ambiguity. Decisions under uncertainty. The code writes itself.

The moat

Let me tell you where the actual competitive advantage lives in 2026, because it’s not where most people think.

Adversarial prompting. Not “please write me a function.” Structured debate. Multi-model validation. Prompt architectures that force AI systems to stress-test their own output before a human ever sees it. If your AI workflow is “ask, receive, ship” you’re building on sand.

Agent-to-agent orchestration. A2A. Your agents need to talk to each other, critique each other, hand off work with context preserved. Single-agent workflows are the new single-threaded applications. They work for toy problems. They fall apart at scale.

Human-in-the-loop governance. Not rubber stamping. Real decision-making at real checkpoints. The human who can look at an AI-generated implementation plan and say “this misses the failure mode when the database connection drops during a write” is worth more than the human who can implement the connection handling by hand.

Spec engineering. Producing clear specs. Clear implementation plans. Nothing left to interpretation. No ambiguity for the AI to fill with hallucinated assumptions. This is the skill. This is the craft. The rest is mechanical.
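One way to make “nothing left to interpretation” checkable is to give the spec a shape and lint it before any agent sees it. The structure below is a hypothetical sketch built from the elements I mentioned earlier (acceptance criteria, failure modes, concrete file paths, test-first task ordering), and the list of vague phrases is deliberately tiny. The example spec borrows the dropped-database-connection failure mode from above.

```python
import re
from dataclasses import dataclass, field

# Hypothetical spec shape. The vague-phrase list is a tiny illustrative sample.
VAGUE = re.compile(r"\b(?:TBD|etc|as appropriate|should probably|somehow)\b", re.IGNORECASE)


@dataclass
class Task:
    title: str
    files: list[str]  # concrete paths the task touches
    test_first: str   # the failing test to write before any implementation


@dataclass
class Spec:
    goal: str
    acceptance_criteria: list[str]  # the definition of done
    failure_modes: list[str]
    tasks: list[Task] = field(default_factory=list)  # in TDD order


def lint_spec(spec: Spec) -> list[str]:
    """Return every problem found; an empty list means the spec is ready to hand off."""
    problems: list[str] = []
    if not spec.acceptance_criteria:
        problems.append("no acceptance criteria, so no definition of done")
    if not spec.failure_modes:
        problems.append("no failure modes listed")
    if not spec.tasks:
        problems.append("no tasks")
    for i, task in enumerate(spec.tasks, start=1):
        if not task.files:
            problems.append(f"task {i} ({task.title}) names no files")
        if not task.test_first:
            problems.append(f"task {i} ({task.title}) has no test to write first")
    text = " ".join([spec.goal, *spec.acceptance_criteria, *spec.failure_modes,
                     *(t.title for t in spec.tasks)])
    problems += [f"ambiguous wording: {m.group(0)!r}" for m in VAGUE.finditer(text)]
    return problems


ready = Spec(
    goal="Persist sync events to Postgres",
    acceptance_criteria=["An event acknowledged to the client survives a process restart"],
    failure_modes=["Database connection drops during a write: roll back, then retry "
                   "with the same idempotency key"],
    tasks=[Task("event writer", ["sync/writer.py", "tests/test_writer.py"],
                "test_write_survives_connection_drop")],
)
vague = Spec(goal="Handle errors as appropriate", acceptance_criteria=[],
             failure_modes=["TBD"], tasks=[Task("wire up the API", [], "")])
print(lint_spec(ready))  # []
for problem in lint_spec(vague):
    print(problem)
```

A lint like this won’t tell you whether the failure modes are the right ones. That’s still the human’s job. It just refuses to let an obviously underspecified plan reach an agent that will happily fill the gaps with guesses.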

Coding is becoming irrelevant. Engineering is where it’s at. Orchestration. Monitoring. Autonomous feedback loops. This is what we’re solving in 2026. Everything else is noise.

Why I’m building this for free

Part of my mission has always been helping secure the world. I teach SEC565 and SEC699 at SANS. I run Offensive Guardian, a security consultancy built around long-term retainer partnerships where I personally lead every engagement (red team, purple team, adversary simulation, vCISO advisory). I’ve spent years breaking into environments and helping defend them. And one thing that terrifies me about the AI shift is that the security implications are massive and most people aren’t keeping up.

AI-generated code ships faster. It also ships with AI-generated vulnerabilities, at AI-generated scale. If you think your AppSec program was struggling to keep up with human developers, wait until every engineer on your team is producing 10x the code with half the understanding of what it actually does. The need for adversarial testing (the red team kind, not just the prompt kind) isn’t going away. It’s getting more urgent by the month.

That’s part of why I’m giving the development tooling away. Crossfire is open source on GitHub. Ralph-NG will be open source. The brownfield analysis tooling will be open source. The blogposts (including this one) are free.

The alternative is a world where only well-funded companies have access to proper AI-first development workflows, while everyone else is either vibe-coding slop or still writing everything by hand. Both paths lead to insecure software. Both paths lead to getting left behind. The community deserves better tools. Not paywalled SaaS platforms charging per seat. Actual tools, built by someone who understands what “code quality” means when an attacker is reading your source.

And if you’re an organization that wants a security partner who understands both the offensive and the AI-first development side of this equation, that’s what Offensive Guardian is for. I embed into your security journey long-term. Same person, building context over time, not a rotating cast of consultants who need to be re-onboarded every quarter.

Wake up

I know this is blunt. I know some of you are gonna read this and feel defensive about your vim config and your hand-rolled build scripts. I get it. I was there too, not that long ago.

But the numbers don’t care about your feelings. A Google principal engineer admitted an AI did in an hour what her team spent a year on. Jensen Huang is telling his $500K engineers to burn $250K in tokens or he’s gonna lose his mind. Dario Amodei is predicting AI will handle most coding end to end within months. Microsoft’s CTO is bored by engineers who only know how to code. Dave Kennedy is independently building the same multi-model adversarial workflows I’ve been blogging about. Jesse Vincent’s Superpowers is one of the most popular Claude Code plugins and GSD has 30K+ stars, because engineers are hungry for spec-driven workflows. The pattern is everywhere if you look.

Adapt or die is dramatic. But adapt or become irrelevant? That’s just Tuesday in 2026.

The people who figure out spec-driven development, adversarial validation, multi-agent orchestration, and human-in-the-loop governance are gonna eat everyone else’s lunch. The people who are still arguing about tabs vs spaces while AI writes their entire codebase for them are gonna look up one day and wonder what happened.

Start building AI-first systems. Start thinking about the spec as the product. Start using adversarial workflows to catch the mistakes your single-model copilot is too polite to mention. Start treating human oversight as the skill, not human execution.

The tools are here. Some of them I built. Some of them I’m building right now. All of them are free.

You just have to use them.

One last thing

And look, while I’m putting myself out there… if anyone from xAI, OpenAI, Anthropic, Nvidia, or any organization working on the future of AI-assisted development is reading this and thinks “this guy might be useful”… my DMs are very much open. I’ve been building adversarial AI workflows, teaching offensive security at SANS, and thinking about the intersection of AI and security for years. I’d love the opportunity to do this at a scale where it actually moves the needle globally instead of just from my apartment in Lisbon. So if Elon, Jensen, Sam, or Dario happen to be reading this (a man can dream), you know where to find me. Worst case, you get a motivated 31-year-old with strong opinions about spec engineering. Best case, we change how the world builds software. I’ll take those odds.